Workflow fit and measurable results are what will win the race.

Welcome to Babgverse — your insider guide to leveraging artificial intelligence to advance your career, improve content creation, and start or scale your business.

Last week, I had the chance to dive deep into the AI coding wars by running a head-to-head comparison between Claude Code and Cursor. After building real projects with both tools—a personal landing page modeled after Gary V's site and a new platform for ThinkPath—the results were both expected and surprising. What struck me most was how the choice between terminal-first and IDE-integrated approaches fundamentally changes how you think about AI-assisted development.

This comparison comes at a crucial time. AI now generates 41% of all code, with 256 billion lines written in 2024 alone, and the global AI code tools market is projected to grow from USD 4.86 billion in 2023 to USD 26.03 billion by 2030, a CAGR of 27.1%. Developers need practical guidance on which tools deliver real value.
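If you like to sanity-check headline numbers, the growth rate in that market projection is easy to verify. Here is a minimal sketch, assuming the projection covers the seven years from 2023 to 2030; the function name `cagr` is mine, purely for illustration:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size figures quoted above, in USD billions.
# Assumption: 2023 is year zero and 2030 is the end year (7 periods).
rate = cagr(4.86, 26.03, 2030 - 2023)
print(f"Implied CAGR: {rate:.1%}")
```

The implied rate comes out at roughly 27.1%, so the start value, end value, and growth rate quoted above are consistent with one another.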

Quick reminder: Maximize your AI development workflow with a strategic tool selection approach! While both Claude Code and Cursor offer powerful AI-assisted coding capabilities, our hands-on testing reveals the importance of matching tools to your specific development needs. Remember these key takeaways as you evaluate AI coding assistants:

Understand your workflow style: Are you terminal-focused or IDE-centric? Your natural development environment should guide your primary tool choice, not just feature comparisons or hype.

Test with real projects, not tutorials: Have you validated AI tools against your actual codebase complexity? Our testing with production-level projects revealed significant differences that simple demos never show.

Track true productivity metrics: When did you last measure actual completion time versus perceived speed? The gap between developer estimates (20% faster) and reality (19% slower in some studies) highlights the importance of objective measurement.

Now, let's dive into some key updates and then this week's big topic: how the battle between Claude Code and Cursor is reshaping the AI-assisted coding landscape and what it means for your development workflow.

WHAT’S CATCHING MY EYE THIS WEEK

After exploring the latest developments in AI-powered development, I've spotted three fascinating trends that are reshaping how we build software. Here's what you need to know 😎

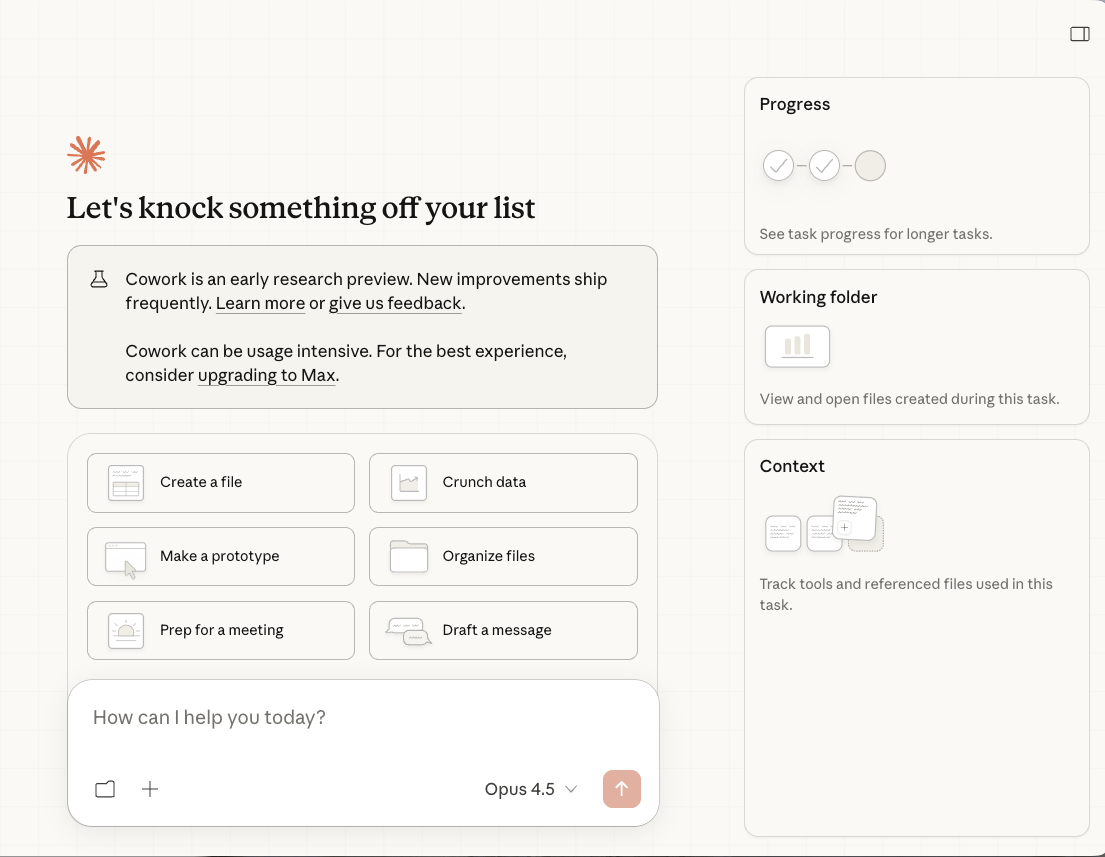

Claude Code Tasks: Background Agents Change Everything

Anthropic's Claude Code now supports background tasks that run autonomously while you focus on other work. This isn't just a feature update—it's a paradigm shift. Developers are reporting that they can kick off complex refactoring jobs, go to lunch, and return to find the work completed. The key innovation here is the ability to run multiple agents in parallel, each handling different aspects of a project. For businesses, this means dramatically reduced development cycles and the ability to tackle projects that previously required entire teams.

Agents That Run for Weeks: The New Normal

The latest AI agents can now operate continuously for extended periods, handling complex multi-step projects that span days or even weeks. Companies are deploying these agents for everything from codebase migrations to comprehensive testing suites. The economics are compelling: what once required a team of developers working for months can now be accomplished by a well-configured AI agent system in a fraction of the time. This isn't replacing developers—it's amplifying their capabilities exponentially.

The Rise of Agent Skills: Specialized AI Workers

A fascinating trend emerging is the development of specialized 'skills' for AI agents—pre-built capabilities that can be mixed and matched for specific tasks. Think of it like hiring specialists: one skill handles database migrations, another manages API integrations, and yet another focuses on security audits. This modular approach means you can assemble exactly the right AI team for your project without starting from scratch each time.

Claude Code vs Cursor: The Hands-On Verdict

Want to pick the right AI coding tool for your workflow? Hands-on testing with both Claude Code and Cursor can help you build faster and ship better code.

Why This Matters For You:

Traditional tool comparisons focus on feature lists and benchmarks, creating lengthy evaluation cycles between reading reviews, testing demos, and making decisions. Real-world testing collapses this timeline from weeks to hours by building actual projects and measuring concrete outcomes simultaneously.

Before diving deeper, consider some compelling stats:

Startup work accounts for 32.9% of Claude Code usage, compared with 23.8% for enterprise work.

Teams reporting "considerable" productivity gains also see 3.5× improvements in code quality.

AI now generates 41% of all code, with the global market projected to reach USD 26.03 billion by 2030.

💡 Real Implementation I’m Testing Right Now

I've built projects with both tools to understand their true capabilities:

With Cursor, I created a personal landing page modeled after Gary V's site. The workflow involved analyzing a screenshot, searching the web for current design patterns, and implementing changes through the IDE's visual interface. What used to require constant context switching between browser research and coding became seamless—one tool handled everything from design inspiration to implementation.

With Claude Code, I built the ThinkPath education platform landing page entirely through terminal commands. The autonomous nature meant less hand-holding but required more trust in the AI's decisions. Complex multi-file operations that would trip up other tools executed flawlessly, though the lack of visual feedback meant I couldn't preview changes as easily.

Here's the magic: you no longer have to choose between speed and control. You can have both, as long as you match the tool to your development style and project requirements.

Ready to choose your ideal tool? Here's a practical process to guide you:

Match Tool to Project Complexity: Large-scale refactoring across 18,000+ line files? Claude Code's autonomous operations shine here. Rapid prototyping with external resources and iterative design? Cursor's integrated approach wins.

Weigh Cost Against Accuracy: Claude Code costs about 4× more per session but delivers higher accuracy and fewer revisions. Cursor is $20/month, making it budget-friendly for high-volume teams.

Establish Success Metrics: Define how you'll measure actual productivity—completion time, revision cycles, code quality—not just perceived speed. Remember: developers estimated 20% productivity gains while actual measurements showed 19% slowdowns in some contexts.

Plan Your Hybrid Strategy: Many advanced developers maximize efficiency by using both tools in tandem—for instance, leveraging Cursor for daily tasks and rapid prototyping where real-time visual feedback is helpful, and deploying Claude Code when tackling complex, autonomous codebase changes that benefit from minimal supervision. This combination lets you leverage the strengths of each platform based on project needs.
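To make the success-metrics step concrete, here is a minimal sketch of a task log that compares what you estimated with what actually happened, per tool. Everything in it is an assumption for illustration: the `TaskRecord` fields, the `summarize` helper, and especially the sample numbers, which are placeholders and not results from my testing.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRecord:
    tool: str                 # e.g. "cursor" or "claude-code"
    estimated_minutes: float  # your guess before starting the task
    actual_minutes: float     # wall-clock time until the change shipped
    revision_cycles: int      # rounds of rework after the first attempt


def summarize(records: list[TaskRecord]) -> dict[str, dict[str, float]]:
    """Group records by tool and compare estimated time with measured time."""
    by_tool: dict[str, list[TaskRecord]] = {}
    for record in records:
        by_tool.setdefault(record.tool, []).append(record)

    summary: dict[str, dict[str, float]] = {}
    for tool, rows in by_tool.items():
        estimated = sum(r.estimated_minutes for r in rows)
        actual = sum(r.actual_minutes for r in rows)
        summary[tool] = {
            "tasks": len(rows),
            "avg_actual_minutes": mean(r.actual_minutes for r in rows),
            "avg_revision_cycles": mean(r.revision_cycles for r in rows),
            # Positive means tasks took longer than you expected.
            "estimate_error": (actual - estimated) / estimated,
        }
    return summary


# Placeholder data only: replace with your own logged tasks.
records = [
    TaskRecord("cursor", 60, 75, 2),
    TaskRecord("cursor", 30, 30, 1),
    TaskRecord("claude-code", 90, 80, 1),
    TaskRecord("claude-code", 45, 55, 0),
]

summary = summarize(records)
for tool, stats in summary.items():
    print(tool, stats)
```

The point of the `estimate_error` field is to surface the perception gap: if it stays positive across a few weeks of real tasks, the tool feels faster than it is.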

💎 Pro Tips You Won’t Hear Elsewhere:

Context Window Management: Claude Code excels at understanding entire codebases because it can autonomously process massive context windows. Cursor requires more intentional context feeding but offers better visual confirmation of what context it's using.

The Iteration Speed Paradox: Faster isn't always better. Teams using AI review alongside coding achieve 81% quality improvements, compared with 55% for teams focused purely on speed. Choose tools that encourage good practices, not just rapid output.

Multi-Tool Mastery: Rather than forcing yourself into one ecosystem, develop expertise in both. Use Cursor's strengths for client-facing work where visual iteration matters, and Claude Code's power for backend refactoring where autonomy trumps interaction.

⚡ Quick-Start Action Plan:

Terminal-focused developers should use Claude Code; IDE users should start with Cursor.

Try both on 2-3 real projects from your codebase before choosing.

Track real productivity: measure completion time, revision cycles, and code quality—not just speed.

Factor in team context: 32.9% of Claude Code usage was for startup work, compared with 23.8% for enterprise work.

Plan for the Hybrid Future: The two tools serve different use cases, so budgeting for selective use of each may be optimal.

Remember This:

AI coding tools are evolving quickly. With 70% of large enterprises past the testing phase, choosing between Claude Code and Cursor is about future readiness, not just today’s features.

These tools reflect different AI philosophies. Pick based on your team’s needs, project, and budget.

If you're ready to leverage these tools strategically in your development workflow, book a consultation with us at Babgverse to develop a customized AI coding strategy tailored to your specific needs!

Excited to see what you create! Drop me a reply if you build something awesome – I’d love to check it out and maybe even feature it in an upcoming newsletter! 🙌

Thanks for reading! 😊
